Welcome to DeeR’s documentation!

DeeR (Deep Reinforcement) is a Python library for training an agent to behave in a given environment so as to maximize a cumulative sum of rewards (see "What is deep reinforcement learning?").

Key advantages of the library:

- A single library gives you access to techniques such as Double Q-learning, prioritized Experience Replay, Deep Deterministic Policy Gradient (DDPG), Combined Reinforcement via Abstract Representations (CRAR), etc.

- This package provides a general framework where observations are made up of any number of elements (scalars, vectors or frames).

- You can easily add a validation phase that lets you stop the training process before overfitting sets in. This is useful when the environment depends on scarce data (e.g. limited time series).
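To make the validation idea concrete, here is a minimal sketch of validation-based early stopping in plain Python. It does not use DeeR's API: `train_with_validation`, `train_epoch`, `validation_score` and `patience` are hypothetical names chosen for illustration, and the validation curve is made-up data.

```python
from typing import Callable, List, Tuple


def train_with_validation(
    train_epoch: Callable[[int], None],
    validation_score: Callable[[], float],
    max_epochs: int = 50,
    patience: int = 3,
) -> Tuple[int, float, List[float]]:
    """Run training epochs and stop once validation stops improving.

    Hypothetical helper (not part of DeeR).
    Returns (best_epoch, best_score, all_validation_scores).
    """
    best_epoch, best_score = -1, float("-inf")
    scores: List[float] = []
    for epoch in range(max_epochs):
        train_epoch(epoch)            # one epoch of learning on the training data
        score = validation_score()    # evaluation on held-out data
        scores.append(score)
        if score > best_score:
            best_epoch, best_score = epoch, score
        elif epoch - best_epoch >= patience:
            break                     # no improvement for `patience` epochs
    return best_epoch, best_score, scores


# Toy usage: a made-up validation curve that peaks at epoch 2, then degrades.
_curve = iter([1.0, 2.0, 3.0, 2.5, 2.0, 1.5, 1.0])
best_epoch, best_score, scores = train_with_validation(
    train_epoch=lambda epoch: None,          # stand-in for real training
    validation_score=lambda: next(_curve),   # stand-in for real evaluation
    max_epochs=7,
    patience=3,
)
```

In practice, the parameters saved at `best_epoch` would be the ones kept for testing, since later epochs scored worse on the validation data.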

In addition, the framework is designed so that it is easy to:

- build any environment

- modify any part of the learning process

- use your favorite Python-based framework to code your own learning algorithm or neural network architecture. The provided learning algorithms and neural network architectures are based on Keras.
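As an illustration of what an environment needs to expose to an agent, the following is a minimal, self-contained sketch. It is not DeeR's environment base class: `ToyRandomWalkEnv` and all of its method names are assumptions made for this example. The observation is deliberately made of two elements (a scalar and a vector) to mirror the multi-element observations mentioned above.

```python
from typing import List, Tuple


class ToyRandomWalkEnv:
    """Hypothetical 1-D walk environment (not DeeR's base class).

    The agent moves left (action 0) or right (action 1) on positions
    0..size-1 and receives reward 1 on reaching the right-most position.
    """

    def __init__(self, size: int = 5) -> None:
        self.size = size
        self.position = 0
        self.reset()

    def reset(self) -> None:
        # Start every episode in the middle of the line.
        self.position = self.size // 2

    def n_actions(self) -> int:
        return 2

    def observe(self) -> Tuple[float, List[float]]:
        # An observation made of two elements: a scalar and a vector.
        scalar = self.position / (self.size - 1)
        one_hot = [1.0 if i == self.position else 0.0 for i in range(self.size)]
        return scalar, one_hot

    def act(self, action: int) -> float:
        # Apply the action, clip to the valid range, and return the reward.
        step = 1 if action == 1 else -1
        self.position = min(max(self.position + step, 0), self.size - 1)
        return 1.0 if self.position == self.size - 1 else 0.0

    def in_terminal_state(self) -> bool:
        return self.position == self.size - 1


# Toy usage: always move right until the episode ends.
env = ToyRandomWalkEnv(size=5)
total_reward = 0.0
while not env.in_terminal_state():
    total_reward += env.act(1)
```

The actual interface to implement is described in the API reference; this sketch only shows the kind of responsibilities (reset, observe, act, terminal test) an environment typically carries.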

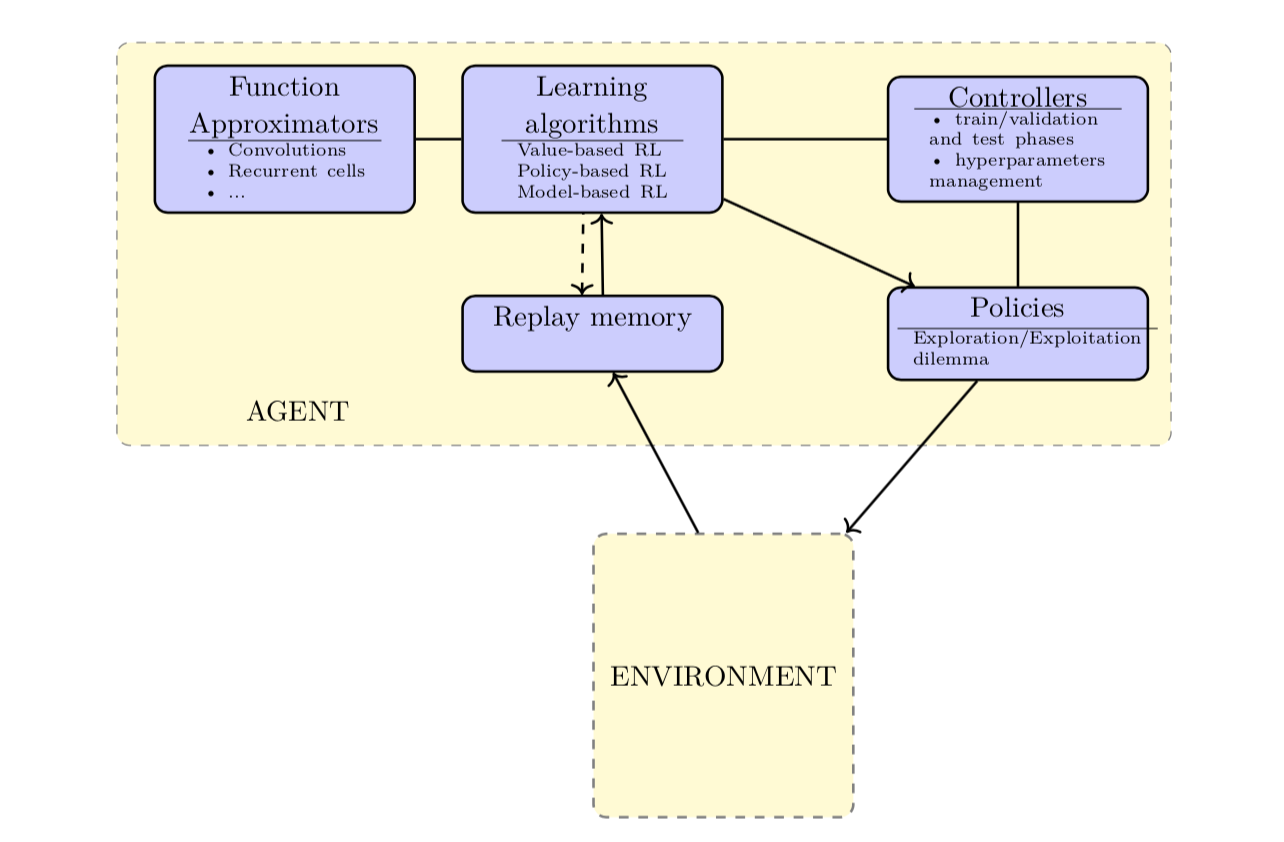

Figure: General schema of the different elements available in DeeR.

DeeR is a work in progress and input is welcome. Please submit any contribution via pull request.

What is new

Version 0.4

- Integration of CRAR, which combines the model-free and the model-based approaches via abstract representations.

- Expanded documentation; some interfaces have been updated.

Version 0.3

- Integration of different exploration/exploitation policies and the possibility to easily build your own.

- Integration of DDPG for continuous action spaces (see actor-critic).

- The naming convention for this project and some interfaces have been updated, which may break backward compatibility. If your code is affected, adapt it to the new convention by consulting the API in this documentation or the current version of the examples.

- Additional automated tests
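For readers unfamiliar with exploration/exploitation policies, here is a minimal sketch of one common choice, epsilon-greedy with a linearly decaying epsilon. It is written in plain Python and is not DeeR's policy interface: `epsilon_greedy`, `linear_epsilon` and their parameters are hypothetical names used only for illustration.

```python
import random
from typing import Optional, Sequence


def epsilon_greedy(q_values: Sequence[float], epsilon: float,
                   rng: Optional[random.Random] = None) -> int:
    """Pick a uniformly random action with probability epsilon,
    otherwise the action with the highest estimated Q-value."""
    rng = rng or random.Random()
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))                       # explore
    return max(range(len(q_values)), key=lambda a: q_values[a])   # exploit


def linear_epsilon(step: int, start: float = 1.0, end: float = 0.1,
                   decay_steps: int = 1000) -> float:
    """Linearly anneal epsilon from `start` to `end` over `decay_steps`."""
    fraction = min(step / decay_steps, 1.0)
    return start + fraction * (end - start)


# Toy usage with made-up Q-values for three actions.
q = [0.1, 0.9, 0.3]
greedy_action = epsilon_greedy(q, epsilon=0.0)                      # always exploits
random_action = epsilon_greedy(q, epsilon=1.0, rng=random.Random(0))  # always explores
```

A high epsilon early in training favors exploration; annealing it lets the agent rely more on its learned Q-values as they become more accurate.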

Version 0.2

- Standalone Python package (you can simply run `pip install deer`)

- Integration of new example environments: toy_env_pendulum, PLE environment and Gym environment

- Double Q-learning and prioritized Experience Replay

- Augmented documentation

- First automated tests
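As a reminder of what Double Q-learning changes, the sketch below computes a single learning target in plain Python. It is a generic illustration, not DeeR's implementation: `double_q_target` and its arguments are hypothetical, and the Q-values are made-up numbers. The online network selects the next action, while the target network evaluates it:

$$y = r + \gamma \, Q_{\text{target}}\big(s', \arg\max_{a} Q_{\text{online}}(s', a)\big)$$

```python
from typing import Sequence


def double_q_target(reward: float, gamma: float,
                    q_online_next: Sequence[float],
                    q_target_next: Sequence[float],
                    terminal: bool = False) -> float:
    """Double Q-learning target for one transition (hypothetical helper).

    q_online_next / q_target_next hold the Q-values of every action in the
    next state, as estimated by the online and target networks respectively.
    """
    if terminal:
        return reward                      # no bootstrapping past the end
    # Action selection uses the online estimates ...
    best_action = max(range(len(q_online_next)), key=lambda a: q_online_next[a])
    # ... while its value is read from the target estimates.
    return reward + gamma * q_target_next[best_action]


# Toy usage with made-up estimates for three actions.
target = double_q_target(
    reward=1.0,
    gamma=0.9,
    q_online_next=[0.2, 0.8, 0.5],   # online network prefers action 1
    q_target_next=[1.0, 0.5, 2.0],   # target network values action 1 at 0.5
)
```

With these numbers, standard Q-learning would bootstrap from the largest target value (2.0), whereas the double variant bootstraps from 0.5, which is how it reduces overestimation of action values.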

Future extensions:

- Several agents interacting in the same environment

- …

How should I cite DeeR?

Please cite DeeR in your publications if you use it in your research. Here is an example BibTeX entry:

    @misc{franccoislavet2016deer,
      title={DeeR},
      author={Fran\c{c}ois-Lavet, Vincent and others},
      year={2016},
      howpublished={\url{https://deer.readthedocs.io/}},
    }

User Guide

API reference

If you are looking for information on a specific function, class or method, this API reference is for you.